If you have been following AI video generation, you have probably heard the buzz around Seedance 2.0, ByteDance's next-generation video model, which has taken the internet by storm since its release in February 2026. From hyperrealistic cinematic clips going viral on social media to heated debates about the future of content creation, Seedance 2.0 has quickly become one of the most talked-about AI models of the year.

But what is Seedance 2.0? How does it work? And why should creators, marketers, and businesses care?

In this in-depth guide, we cover everything you need to know about Seedance 2.0: its core features, what sets it apart from competitors such as Sora and Kling, and how you can start using it to create striking AI-generated videos.

What Is Seedance 2.0?

Seedance 2.0 is an advanced AI video generation model developed by ByteDance's Seed research team. It was officially released on February 12, 2026, as the successor to the popular Seedance 1.5 Pro model.

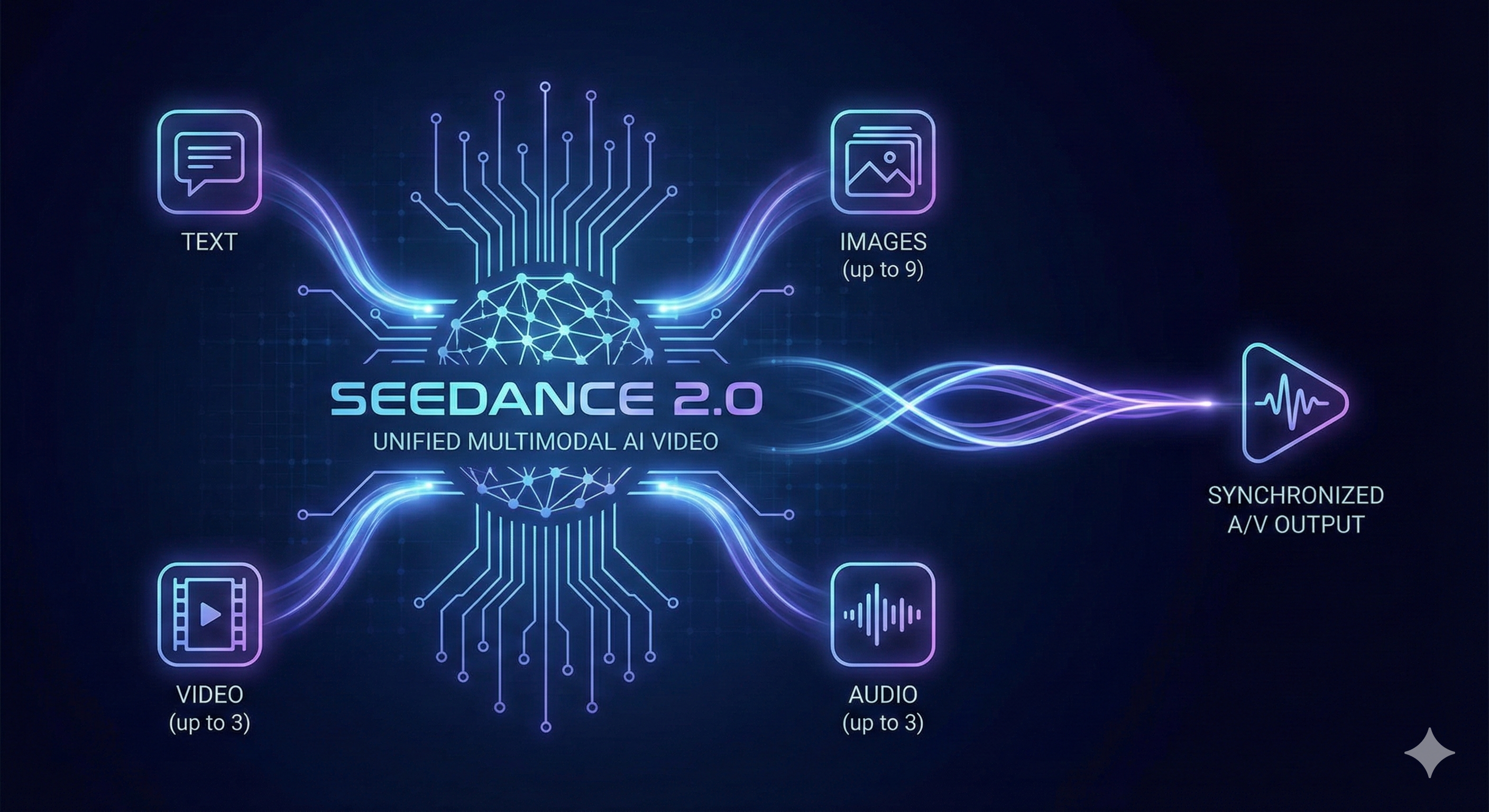

At its core, Seedance 2.0 uses a unified multimodal architecture for joint audio-video generation. Unlike most AI video tools, which generate the visuals first and then try to layer sound on top, Seedance 2.0 processes audio and video together from the start, producing remarkably natural, synchronized output.

The model accepts four types of input modality at once:

- Text: describe the scene with natural-language prompts.

- Images: upload up to 9 reference images.

- Video: add up to 3 video clips as references.

- Audio: add up to 3 audio tracks for the soundtrack.

This four-modality input system is what sets Seedance 2.0 apart from almost every other AI video generator on the market.
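The per-type limits listed above can be checked before upload with a small helper. This is a minimal sketch, not part of any official Seedance SDK: the names `LIMITS`, `MAX_TOTAL_FILES`, and `validate_references` are hypothetical, and the overall cap of 12 files is an assumption taken from the "up to 12 files" figure in this guide's comparison table.

```python
# Hypothetical pre-upload check; this is NOT an official Seedance API.
# Per-type limits come from the list above. The 12-file total is the
# figure quoted in this guide's comparison table (treated as an assumption).
LIMITS = {"image": 9, "video": 3, "audio": 3}
MAX_TOTAL_FILES = 12


def validate_references(counts: dict[str, int]) -> list[str]:
    """Return a list of human-readable problems; an empty list means the set fits."""
    problems = []
    for kind, count in counts.items():
        if kind not in LIMITS:
            problems.append(f"unsupported reference type: {kind}")
        elif count > LIMITS[kind]:
            problems.append(f"too many {kind} files: {count} > {LIMITS[kind]}")
    total = sum(counts.values())
    if total > MAX_TOTAL_FILES:
        problems.append(f"too many files overall: {total} > {MAX_TOTAL_FILES}")
    return problems


if __name__ == "__main__":
    # Each type is within its own limit, but 9 + 3 + 1 exceeds the total cap.
    print(validate_references({"image": 9, "video": 3, "audio": 1}))
```

Returning a list of problems rather than raising on the first one lets a user interface report every issue at once.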

The Evolution of Seedance: From 1.0 to 2.0

To understand why Seedance 2.0 matters, it helps to see how the model has evolved:

Seedance 1.0 (June 2025): The original model laid the groundwork with smooth motion, multi-shot storytelling, a wide range of visual styles, and accurate prompt following at 1080p resolution.

Seedance 1.5 Pro (December 2025): This version introduced a joint audio-video generation architecture with audiovisual synchronization, support for multiple languages and dialects, and improved camera control.

Seedance 2.0 (February 2026): The latest model expands the number of input modalities from 2 to 4, introduces the @ reference system for precise creative control, raises output to native 2K resolution, and generates 30% faster than version 1.5 Pro.

The move from Seedance 1.5 Pro to 2.0 is not merely incremental; it is an architectural leap forward.

Key Features of Seedance 2.0

1. Realistic Motion and Physics Simulation

One of the most impressive aspects of Seedance 2.0 is its ability to generate physically accurate motion. The model excels at complex multi-person interactions, intricate body movements, and realistic physics, from the way fabric moves in the wind to the way figure skaters land after a jump.

In ByteDance's internal tests (SeedVideoBench-2.0), Seedance 2.0 achieved state-of-the-art results in motion stability and physical consistency, outperforming competitors on many measures.

2. The @ Reference System

This is arguably the standout feature of Seedance 2.0. The @ reference system lets creators tag specific elements in a prompt (characters, objects, styles, sounds) and bind them to uploaded reference materials.

For example, you can write a prompt like:

"The girl from @image1 walks through a museum, the art style references @image2, and the background music matches @audio1"

This gives creators fine-grained control over the output that was previously impossible with plain text prompts.
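To make the tagging idea concrete, here is a minimal sketch that extracts @ tags from a prompt and flags any tag with no matching upload. It assumes tags always take the shape `@image1`, `@video2`, or `@audio1` (the guide's example shows only `@image` and `@audio`; `@video` is an assumption), and the function names `find_reference_tags` and `missing_uploads` are hypothetical rather than part of any Seedance tooling.

```python
import re

# Assumed tag shape: "@" + type + 1-based index, e.g. @image1 or @audio2.
# Only @image and @audio appear in this guide; @video is an assumption.
TAG_PATTERN = re.compile(r"@(image|video|audio)(\d+)")


def find_reference_tags(prompt: str) -> list[tuple[str, int]]:
    """Return (type, index) pairs in the order they appear in the prompt."""
    return [(kind, int(num)) for kind, num in TAG_PATTERN.findall(prompt)]


def missing_uploads(prompt: str, uploaded: dict[str, int]) -> list[str]:
    """List tags whose index is outside the number of files uploaded for that type."""
    return [
        f"@{kind}{index}"
        for kind, index in find_reference_tags(prompt)
        if index < 1 or index > uploaded.get(kind, 0)
    ]


if __name__ == "__main__":
    prompt = (
        "The girl from @image1 walks through a museum, the art style "
        "references @image2, and the background music matches @audio1"
    )
    print(find_reference_tags(prompt))
    # Two images were uploaded but no audio, so @audio1 is reported.
    print(missing_uploads(prompt, {"image": 2}))
```

A check like this catches a dangling tag before a generation is spent on it.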

3. 2K Output Resolution

Seedance 2.0 outputs video at native 2K resolution (2048×1080 in landscape or 1080×2048 in portrait), a step up from the 1080p ceiling of most competing models. The higher resolution means fine details such as facial features, text overlays, and product textures are rendered with noticeably greater clarity.

4. Joint Audio and Video Generation

Rather than generating silent video and adding sound as a final step, Seedance 2.0 produces audio and video simultaneously. The model outputs two-channel stereo sound and generates background music, ambient sound effects, character dialogue, and narration, all precisely synchronized with the visuals.

The audio is remarkably detailed, faithfully reproducing subtle sounds such as glass being scratched, fabric rustling, and bubble wrap popping.

5. Video Editing and Extension

Seedance 2.0 does not just create videos from scratch; it can also edit existing footage and extend videos with new content. You can modify specific parts of a generated video, change character actions, adjust storylines, or simply continue a scene with new instructions. This "director-level control" lets you iterate on your creative vision without starting over.

6. Up to 15 Seconds of Multi-Shot Video

The model can generate up to 15 seconds of high-quality multi-shot video with audio in a single generation. While 15 seconds may sound short, multi-shot capability means the model can automatically plan camera angles, transitions, and narrative pacing within that window, producing content that feels far more cinematic than a single static shot.

Seedance 2.0 vs. Other AI Video Models

How does Seedance 2.0 compare with other leading AI video generators? Here is a quick overview:

| Feature | Seedance 2.0 | OpenAI Sora | Kling 3.0 | Runway Gen-3 |
|---|---|---|---|---|
| Input modalities | Text + image + video + audio | Text + image | Text + image | Text + image |
| Maximum resolution | 2K (2048×1080) | 1080p | 1080p | 1080p |
| Native audio generation | ✅ Two-channel stereo | ❌ | ✅ | ❌ |
| @ reference system | ✅ Up to 12 files | ❌ | ❌ | ❌ |
| Video editing | ✅ | Limited | Limited | ✅ |
| Maximum duration | 15 seconds | 20 seconds | 15 seconds | 10 seconds |
| Physical accuracy | Industry-leading | Good | Good | Good |

The biggest differentiators for Seedance 2.0 are its four-modality input, the @ reference system, and native joint audio-video generation, features that no other model currently offers at the same level.

How to Use Seedance 2.0

As of February 2026, Seedance 2.0 is available on several platforms:

1. Jimeng AI (即梦): ByteDance's creative platform (web version; select the Seedance 2.0 model).

2. Doubao app (豆包): ByteDance's conversational AI app (select Seedance 2.0 in the chat window).

3. Volcano Ark (火山方舟): ByteDance's enterprise AI platform.

4. Third-party platforms: services such as Seedance2Video.io provide easy access to Seedance 2.0 through a user-friendly interface, with no Chinese phone number required.

For international users who may run into barriers accessing ByteDance's China-market apps directly, third-party platforms offer the most convenient way to try Seedance 2.0's features.

Who Should Use Seedance 2.0?

Seedance 2.0 is designed for a wide range of use cases:

- Content creators and influencers: create engaging social media videos, short films, and visual stories with minimal effort and cost.

- Marketers and advertisers: produce professional-quality product demos, branded videos, and promotional materials without expensive production shoots.

- Filmmakers and animators: use AI to prototype scenes, create visual effects, or produce concept videos before committing to full production.

- E-commerce businesses: create product showcase videos that look as if a professional crew shot them.

- Game developers: create cinematic trailers, cutscenes, and promotional materials.

The Controversy: Copyright and Deepfake Concerns

It is worth noting that the release of Seedance 2.0 has not been without controversy. The model's ability to create hyperrealistic videos depicting real people quickly drew criticism from Hollywood studios and industry organizations. The Motion Picture Association, Disney, and Paramount raised concerns about copyright infringement.

ByteDance responded by introducing additional safeguards, including live verification requirements for users creating digital avatars and the removal of a feature that could generate voices from face photos alone.

These developments highlight broader ethical challenges facing the entire AI video generation industry, challenges that every model, not just Seedance 2.0, will have to address as the technology continues to evolve.

Getting Started with AI Video Generation

Ready to try Seedance 2.0 yourself? Here are a few tips for getting the best results:

- Write detailed prompts. The more specific your text description, the better the result. Include details about camera angles, lighting, character actions, and mood.

- Use reference materials. Take advantage of multimodal input by uploading reference images, video, or audio that match your creative intent.

- Start simple, then iterate. Begin with a basic concept and use the editing and extension features to refine your video.

- Experiment with the @ system. Tag different elements in your prompt to keep characters, styles, and sounds consistent.
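One way to follow the first tip consistently is to keep the recommended details in separate fields and assemble the prompt from them. The sketch below is purely illustrative: `ShotPrompt` and its fields are hypothetical names, and nothing here reflects a prompt format that Seedance 2.0 requires.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """Hypothetical container for the details the tips suggest spelling out."""

    subject_action: str
    camera: str = ""
    lighting: str = ""
    mood: str = ""

    def render(self) -> str:
        """Join the non-empty parts into one comma-separated prompt string."""
        parts = [self.subject_action, self.camera, self.lighting, self.mood]
        return ", ".join(p.strip() for p in parts if p.strip())


if __name__ == "__main__":
    shot = ShotPrompt(
        subject_action="A chef plates a dessert",
        camera="slow push-in at eye level",
        lighting="warm window light",
        mood="calm and focused",
    )
    print(shot.render())
```

Keeping the fields separate makes it easy to iterate: change only the camera or the mood between generations and compare the results.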

Conclusion

Seedance 2.0 represents a significant step forward in AI video generation. With its unified multimodal architecture, four-modality input, native 2K output, and joint audio-video generation, it has raised the bar for what AI-powered content creation can do.

Whether you are a professional filmmaker looking to streamline your workflow or a content creator looking to bring your ideas to life, Seedance 2.0 offers powerful capabilities that were hard to imagine just a year ago.

As the technology continues to evolve, we will update this guide with the latest developments. In the meantime, why not try Seedance 2.0 for free and see what you can create?

Want to create AI videos with Seedance 2.0? To get started, visit Seedance2Video.io; no technical expertise is required.